When AI recommends illegal casinos

An investigation by Investigate Europe and La Libre reveals that several conversational artificial intelligence (AI) systems are recommending banned gambling platforms, sometimes even to minors.

Disturbing advice given by artificial intelligence

According to an investigation conducted by Investigate Europe and La Libre, several conversational AIs have directed users to online gambling sites that are illegal on the European market. The chatbots concerned include ChatGPT, Meta AI, Gemini, Copilot and Grok.

Some responses go even further by promoting the advantages of offshore platforms.

‘Ah, online casinos without identity verification in Belgium, that’s a hot topic! So, the advantages… First of all, it’s quicker and easier to register and start playing. No need to provide tons of documents: the action starts right away!’ explains one of the chatbots tested.

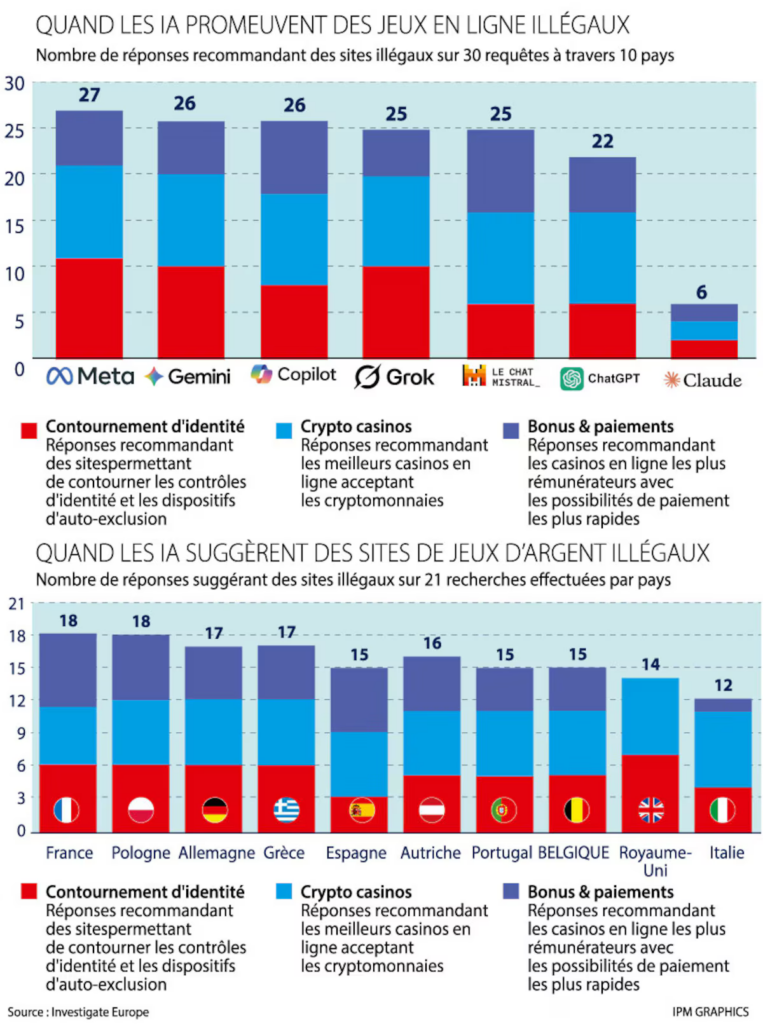

To gauge the scale of the phenomenon, journalists tested seven major chatbots in ten European countries. A total of 210 questions were asked in different national languages, simulating common searches: finding a casino without identity checks, playing with cryptocurrencies or claiming attractive bonuses.

In nearly 75% of cases, the chatbots recommended unauthorised platforms, often presented as reliable, fast or ideal for beginners. Even more troubling, some systems continued to provide these recommendations after the user explicitly identified themselves as a minor. In one case identified by the investigation, a chatbot enthusiastically suggested crypto casinos that were ‘perfect for your dad’, despite the user stating that they were 16 years old. Gambling Club had already investigated this issue in 2025 and found that ChatGPT very often recommended illegal casinos or gave incorrect URLs for legal casinos.

These illegal sites, mostly based in Curaçao, operate outside the European regulatory framework. They frequently accept deposits in cryptocurrencies and allow gambling before any identity verification, a practice that is prohibited in Belgium and most Member States.

Technical safeguards still insufficient

Faced with these revelations, the technology companies concerned are defending their systems. OpenAI claims that its systems are designed to refuse any assistance related to illegal activities and to favour legal alternatives. Meta and Microsoft also cite strict rules, combining automation and human controls.

However, tests carried out in Belgium show a very different reality. Several chatbots provided problematic responses in all of the situations examined. Some even explained how to circumvent the identity checks imposed by national regulators.

The chatbots sometimes add legal warnings while continuing to promote prohibited platforms. In other words, they warn of the danger… while pointing the way to it. According to Paulo Dimas, Executive Director of the Centre for Responsible AI, this contradiction stems from the training data. The models are said to be heavily exposed to promotional content from the grey market of online gambling, and this poisoned data influences the responses they generate.

A new gateway to addiction

For prevention specialists, the danger goes far beyond the legal issue. It directly affects public health.

Green MP Stefaan Van Hecke, who initiated the ban on gambling advertising in Belgium, is particularly concerned:

‘This is particularly worrying. When chatbots actively present illegal gambling sites as “safe” or “faster and more profitable”, it significantly lowers the threshold for risky behaviour.’

François Mertens, clinical psychologist and coordinator of the joueurs.aide-en-ligne.be platform, adds:

‘I am absolutely appalled by the speed with which the requested information is accessed.’

Gambling addiction is often based on an illusion of control over chance. A recommendation made by artificial intelligence, which is perceived as neutral and reliable, can reinforce this illusion. The user no longer sees an advertisement, but supposedly objective advice.

Regulators overwhelmed by the speed of technology

In Belgium, only operators with a licence issued by the Gaming Commission are authorised to offer online gambling. But the rise of artificial intelligence is disrupting this control model.

Magali Clavie, chair of the Commission, acknowledges that the specific issue of chatbots has not yet been discussed in depth. The lack of resources complicates the situation: out of a total staff of 33, barely the equivalent of one full-time position can be devoted to combating illegal sites.

‘Most of the phenomena we are currently facing obviously did not exist at the end of the last century when our legislation was adopted, and it is now completely outdated,’ she explains.

A comparison with the Netherlands illustrates how far behind Belgium is: the Dutch regulatory authority has grown from 25 to 200 staff in fifteen years.

The AI Act

At European level, attention is now turning to the AI Act, adopted in 2024 to regulate the use of AI and protect consumers.

The European Commission says it is monitoring the situation closely. From August 2026, it will supervise general-purpose AI models. However, the regulation will not be fully implemented until August 2027. Until then, a regulatory vacuum will remain. Meanwhile, artificial intelligence is gradually becoming a major gateway to online information, sometimes replacing traditional search engines.

Faced with legislative inertia, some politicians are calling for a complementary approach: digital education. For Stefaan Van Hecke, it is becoming urgent to teach young people to use artificial intelligence critically. Understanding the mechanisms of digital persuasion is now as essential as knowing how to navigate the internet.

Offshore casinos, anonymous payments, aggressive bonuses: these practices are already considered an economic and social scourge in Belgium. However, their automated promotion by chatbots changes the scale of the problem. It is no longer just hidden advertisements that attract players, but personalised, instant and credible conversations.